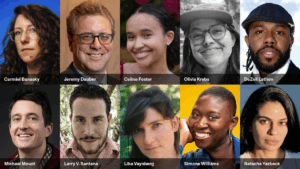

(L-R) Ezekiel Dixon-Román, Tiera Tanksley, Morgan Doctor, Emily M. Bender, Valerie Veatch, Kevin Veatch, Adam Becker, Alix Dunn, Nick Rodello and Keira Havens attend the Ghost in the Machine premiere during the 2026 Sundance Film Festival at The Yarrow Theatre on January 26, 2026 in Park City, Utah. (Photo by Neilson Barnard/Getty Images for Sundance Film Festival)

By Erik Adams

The scariest movie playing at the 2026 Sundance Film Festival isn’t about cursed audio recordings or a killer demon disguised as your true love; rather, it’s a 110-minute essay film about how a handful of guys obsessed with numbers and quantifying human intelligence accidentally (or maybe intentionally) created the blueprints for a water-guzzling, pollutant-spewing, nonconsensual-nude-generating plagiarism device that’s easily manipulated by the worst people in the world and tenuously propping up an alarming percentage of the global economy.

If that description of the various technologies doing business as “artificial intelligence” raises your hackles, then you probably won’t enjoy Valerie Veatch’s Ghost in the Machine, which premieres in the Festival’s NEXT section. But if you’re interested in a new, engrossing, expertly edited way to push back the next time someone says, “Just look it up on ChatGPT” — well, Veatch has you covered.

“I’m so excited to share this film,” Veatch says in her introduction. “It’s an urgent piece, and it is from the heart.”

In the testimony of more than 30 experts — represented at The Yarrow Theatre by Adam Becker, Emily M. Bender, Ezekiel Dixon-Román, Alix Dunn, Keira Havens, Nick Rodello, and Tiera Tanksley — Ghost in the Machine traces the development of the technologies and disciplines that made today’s machine learning and text-to-image models possible. Simultaneously, it points out how the histories of personal computing, semiconductors, statistical mathematics, and so-called artificial intelligence are inextricably linked to notions of control, domination, and scientific racism. As one interviewee states, Silicon Valley likes to pretend it doesn’t have a history; the words and images of Veatch’s film suggest that’s because of all the skull-measuring skeletons in the tech world’s closets.

Early chapters of the documentary elaborate on the eugenicist and racist views of men who laid the groundwork for Silicon Valley. Dixon-Román explains how many of the models developed by Karl Pearson and other mathematical statisticians “emerged specifically out of eugenics”; some of those models are the foundations of today’s machine learning technologies. We see William Shockley, “the man who brought silicon to Silicon Valley,” proposing a “voluntary sterilization bonus plan” in a televised interview. Ghost in the Machine doesn’t catch them saying the quiet parts out loud — there were no quiet parts to begin with.

“There’s been article after article after article saying, ‘What happened to Silicon Valley? We thought that they were on the left, now they’re lining up behind Trump,’” Becker says during the post-premiere Q&A. “As we saw in the movie, the ethos of Silicon Valley — especially the Silicon Valley billionaires — has never really been on the left.”

But Ghost in the Machine isn’t an act of historical excavation; Pearson’s and Shockley’s bigotry appears in headings on their respective Wikipedia pages. The documentary’s function is to connect all of these dots and present them in a digestible fashion. It’s not unlike what chatbots like ChatGPT or Grok are supposed to do; it’s just that the information has been sifted through, analyzed, and assembled by a woman who exists and creates in the physical world.

One of the most potent conclusions Veatch draws is how, at this point, artificial intelligence is nothing more than a marketing tagline. Computer scientist John McCarthy is shown describing how he and his colleagues coined the term in the middle of the 20th century as a simplified way of explaining their work to potential bankrollers. AI was purely theoretical then, and it remains so now, and every empty promise of a forthcoming general artificial intelligence seen in Ghost in the Machine is just another sales pitch to investors.

In fact, the seeds for the documentary were planted by an effort to get the word out about an AI company’s latest innovation: Veatch was invited to test a new tool that “was gonna totally democratize filmmaking.”

“And the moment I started using this technology, it became very clear how problematic these systems are,” she says. “And as soon as I tried to ask this company about it, they were like, ‘Don’t ask these questions.’” Instead, she began putting those questions to people who would answer them.

Some generative AI images make their way into the finished product, illustrating the interviewees’ points and demonstrating an uncanny and increasingly sophisticated output. Motifs in archival footage are fed into the machine and come out the other end as funhouse-mirror reflections of themselves. Talk of drinking the AI Kool-Aid yields a knockoff Kool-Aid Man greeting adoring fans; the unopened water bottles sitting between tech bros on stage at fancy conferences get digital-mutant counterparts that double as commentary on all the water necessary to cool the massive supercomputers that make such images possible.

The more realistic generations raised a challenge for Veatch: In her documentary about the blurring lines between real and unreal, was it readily apparent what was real and what wasn’t?

“I thought it would be very obvious what was AI, what wasn’t, but it’s not,” she says. “So, I was on the phone with Keira one day and I was like, I’ll just put what’s not AI up in the corner and then, you know, label AI as well. And I think that that kind of takes on its own meaning.” That meaning is carried into the world by the “Not AI” pins handed out to audience members after the premiere.

Though the bulk of Ghost in the Machine is relatively doom-and-gloom about the amount of influence and wealth accumulated by the tech elite fueling the AI boom, a glimmer of optimism shines through. “One of the myths is that our future has already been determined, and we are helpless in it,” Tanksley says in the documentary. At the Yarrow, Bender advocates for the importance of asking why a task or responsibility needs to be automated, and the need for stronger regulations and labor protections to hold companies accountable for the damages caused by their products. And she stresses the human component of all of this.

“I think community and connection and really leaning into building local and then broader communities and valuing each other and valuing the humanity of each other and the humanity in systems is an important kind of resistance,” she says.